Planet-Navigating AI “Brain” Helps Drones and Cars Avoid Collisions

NASA Technology

Once you’ve designed a robot that can autonomously explore planetary terrains, putting the same technology in cars, toys, and drones seems almost easy. That’s why conducting NASA-funded research on deep-space computing was a natural fit for Boston-based startup Neurala.

“The use case was ideal for our long-term vision, which is that every device should have a brain,” says Massimiliano Versace, who founded Neurala with colleagues in the Cognitive and Neural Systems Department at Boston University. “The best way to do this is to force yourself into the most difficult situation and use the most ubiquitous and inexpensive processors and sensor packages on the market.”

The company’s core technology is an artificial intelligence (AI) “brain”: neural network software modeled on the human brain that can interact with and learn from its environment using ordinary cameras and sensors. Because the Neurala Brain processes its surroundings locally, it doesn’t require a cloud-based supercomputer, as other AI systems do. That’s where the NASA research was especially crucial.

To put a self-learning robot on Mars, for instance, where communication with Earth can be delayed by as much as 25 minutes, “you need to rely only on the compute power that you have on board,” Versace explains. “You cannot ping a server. You don’t have GPS. You don’t want to send to Mars something that can break or fail or needs a lot of communication and exchanges with Earth.”

A system that can work under such demanding conditions faces far fewer hurdles on Earth.

The idea for Neurala came in 2005, when Versace and his colleagues Anatoly Gorshechnikov and Heather Ames realized that major developments in the latest graphics processing units weren’t just good for video game displays—they also had game-changing potential for artificial intelligence.

“The intuition we had back then was: what if each pixel that the graphic processor processes is treated like a neuron?” Versace recalls. The colleagues started using graphics cards to develop programs that operated more like brains, which compute slowly but handle a large amount of information in parallel (that is, all at once). A traditional central processing unit, by contrast, can process many small pieces of information very fast but only serially, or one after the other.
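The pixel-as-neuron idea can be illustrated with a toy sketch. The firing rule, the `THRESHOLD` value, and all function names below are hypothetical and are not drawn from Neurala's software; a thread pool stands in for the many cores of a graphics processor, so the example shows only the structure of the computation, not a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

THRESHOLD = 0.5  # hypothetical firing threshold, chosen only for illustration


def neuron_fires(pixel):
    """Toy rule: a 'neuron' fires when its pixel is brighter than THRESHOLD."""
    return pixel > THRESHOLD


def process_row(row):
    """Apply the same rule to every pixel in one row of the frame."""
    return [neuron_fires(pixel) for pixel in row]


def run_serial(frame):
    """Visit the rows one after the other, as a single CPU core would."""
    return [process_row(row) for row in frame]


def run_parallel(frame, workers=4):
    """Hand the rows to a pool of workers that apply the same rule independently.

    Python threads do not speed up CPU-bound work like this; the pool only
    mimics how a graphics processor assigns one simple rule to many pixels.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(process_row, frame))


# A tiny 3x3 "camera frame" of brightness values between 0 and 1.
frame = [
    [0.1, 0.9, 0.4],
    [0.7, 0.2, 0.8],
    [0.5, 0.6, 0.3],
]

serial_result = run_serial(frame)
parallel_result = run_parallel(frame)
print(serial_result == parallel_result)
```

Because each pixel's result depends only on that pixel, the work can be split across workers in any order and still give the same answer, which is the property that makes this kind of computation a good fit for graphics hardware.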

An initial signal that this research could have more than a theoretical impact came in early 2009, when the team started working on the Defense Advanced Research Projects Agency (DARPA) SyNAPSE Project, which aimed to develop low-power computers inspired by biological neural systems—specifically, brains.

NASA got wind of the work in 2010, when Versace wrote about the DARPA research in IEEE Spectrum, a magazine published by the Institute of Electrical and Electronics Engineers. Mark Motter, an engineer at NASA’s Langley Research Center, read the article and immediately saw broad potential applications for the brain-inspired system.

“The high-level aspirational goal was to have computational capability and memory collocated so you don’t use up all the energy in the device by shipping data back and forth over a data bus,” recalls Motter. “That caught my interest.”

Technology Transfer

Motter reached out to the Neurala founders and eventually became the technical representative on the company’s Small Business Technology Transfer (STTR) contracts with Langley.

“The first phase of the STTR award was really focused on showing how a rover on Mars would learn to navigate within an unsupervised neuromorphic computing paradigm and find its way in an unfamiliar environment,” Motter says. (Neuromorphic means neural-like in operation.)

At the time, exploring planetary surfaces still required a good deal of human control and power-hungry sensors for even basic functions. There was a sense that AI was the way forward, but it was still largely unproven. In fact, the technology was at a turning point. Less than a decade later, neural networks and deep learning appear ready to transform industries and replace old algorithms in tasks like object recognition and speech recognition.

Back in 2011, working with academic partners in Boston University’s Neuromorphics Lab, Neurala completed its STTR Phase I research before winning the larger Phase II contract, for which the company developed another key to what would soon become its commercial offerings: visual processors based on passive sensors to enable robots to identify and interact with objects and learn new terrain.

Motter, whose own PhD work also involved unsupervised learning and could be applied to unpiloted aerial vehicles, or drones, took particular interest in Neurala’s Phase II STTR research, because the visual processors had clear potential benefits on Earth. Yet another award from a NASA Center Innovation Fund allowed the Neurala team to help Motter develop technology to avert drone collisions.

Benefits

Following all that NASA work, Neurala was able in 2014 to raise $750,000 in private capital. The company then applied for and was awarded an additional $250,000 from NASA through the STTR Phase II Enhancement program, which matches private funds to help commercialize STTR technology.

“This was very important for us, because it gave us a substantial amount of cash to pay for our development costs,” Versace says.

Neurala created several iOS and Android apps for consumer robots and drones like the Parrot Jumping Sumo ground robot and the DJI Phantom and Parrot Bebop drones. The company is also licensing the technology to consumer drone manufacturers Parrot and Teal, Versace says.

“We used these initial apps as proof of concept and almost as marketing materials,” Versace says. “In essence, they gave us the chance to do the real stuff by demonstrating with this low-cost application that we can deliver technology to the end user.”

The products have helped the company reach its real market: designers and manufacturers. By the time Neurala announced in December 2016 that it had raised $14 million in its series A financing round—on top of $2 million in seed funding—it already had contracts with several drone companies, industrial robot manufacturers, and a major automaker looking toward self-driving cars, where the ability to make calculations locally is vital.

“If you’re a drone flying 50 miles an hour, by the time you send video to the cloud where a program identifies a bird in your path and sends back information to steer, you’ve already hit the bird,” Versace says. “You want to be able to compute quickly on the device for safety reasons. Our technology enables us to cut the umbilical cord to the external world.”
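The arithmetic behind that warning is easy to sketch. The 50-mile-an-hour speed comes from the quote above; the two delay values are assumptions chosen only for illustration, not measurements of any Neurala product or cloud service.

```python
# Exact conversion: 1609.344 meters per mile, 3600 seconds per hour.
MPH_TO_METERS_PER_SECOND = 1609.344 / 3600.0


def distance_during_delay(speed_mph, delay_seconds):
    """Return how many meters a vehicle covers while waiting on a decision."""
    return speed_mph * MPH_TO_METERS_PER_SECOND * delay_seconds


DRONE_SPEED_MPH = 50.0  # from the quote above

# Hypothetical decision delays, in seconds (assumed values).
ASSUMED_DELAYS = {
    "on-device": 0.02,
    "cloud round trip": 0.5,
}

for label, delay in ASSUMED_DELAYS.items():
    meters = distance_during_delay(DRONE_SPEED_MPH, delay)
    print(f"{label}: {meters:.2f} m traveled before the drone can react")
```

At 50 miles an hour the drone covers a little over 22 meters every second, so each extra tenth of a second spent waiting for a reply costs more than two meters of travel.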

The company’s main product, its Brains for Bots software development kit, enables app developers to incorporate the Neurala Brain into programs created for a variety of computing platforms used in drones, self-driving cars, industrial robots, smart cameras, toys, gadgets, mobile phones, and computers. The software can learn to identify, find, and track objects. Later iterations of the software will incorporate navigation and advanced collision avoidance.

In the case of smart household devices and toys, local computing prevents security and privacy problems, too. “It’s sort of an Internet of things without the Internet,” Versace says. “With the Internet of things, everything has to be connected with everything else. In reality, sometimes you don’t really need the Internet. Or you don’t want it. Or you can’t have it.”

Recent projects for Neurala include a partnership with Motorola to incorporate facial recognition into cameras worn by police, as well as technology for drones used to detect poachers in Africa.

The Neurala Brain’s ability to function locally owes much to the space agency’s early support, Versace says.

“They gave us the money to do it, but also, they forced us to do it the right way,” he says. “They put very high and stringent demands on the project. It’s like they made us go to the gym and get strong in this project before we faced the real world.”

“Without NASA, Neurala would not be the company that it is,” Versace says.

From left to right: Matt Luciw (Neurala), Mark Motter (Langley Research Center), and Massimiliano Versace (Neurala). Neurala developed and commercialized its neural-network software thanks in part to a series of STTR contracts with Langley.

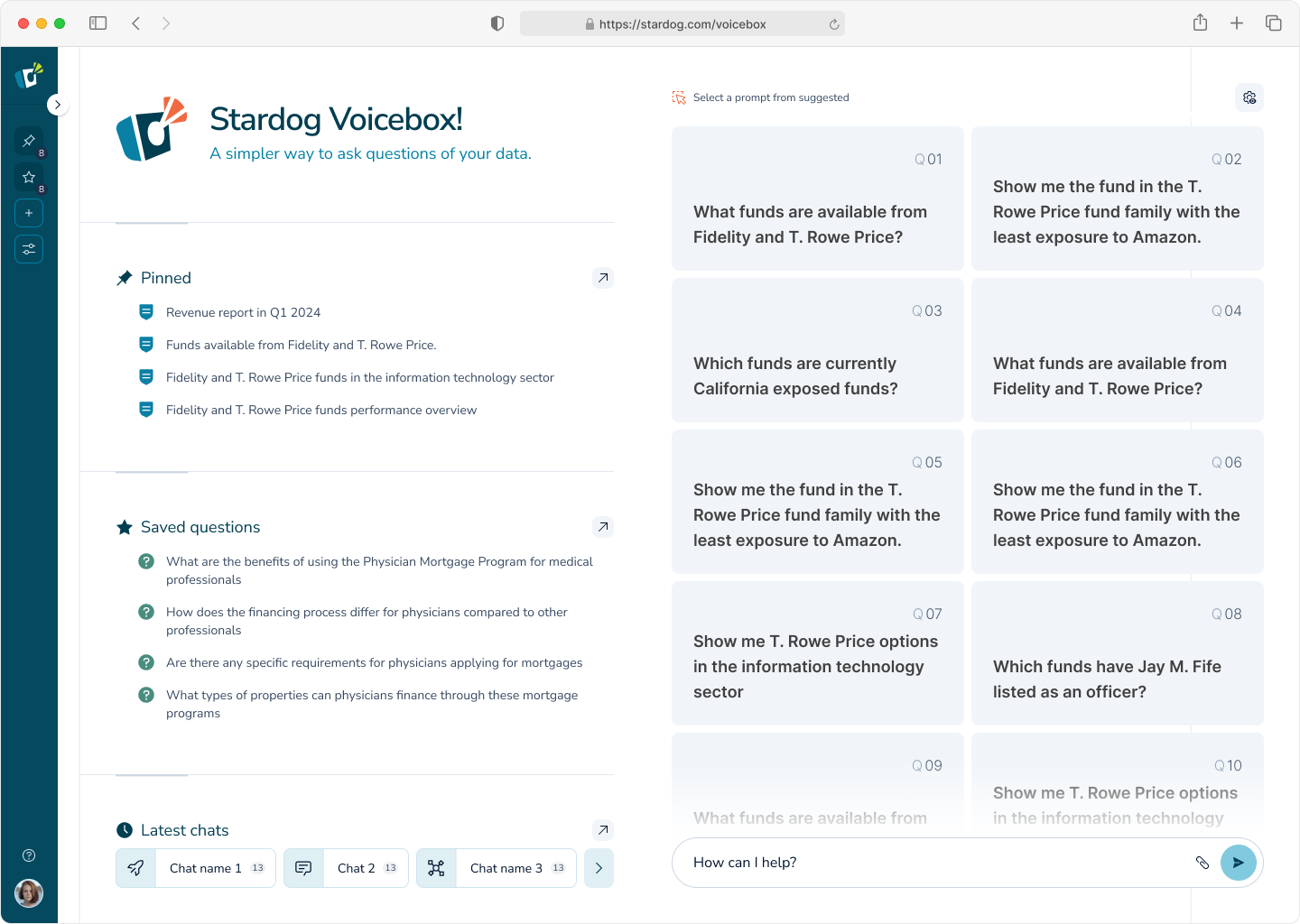

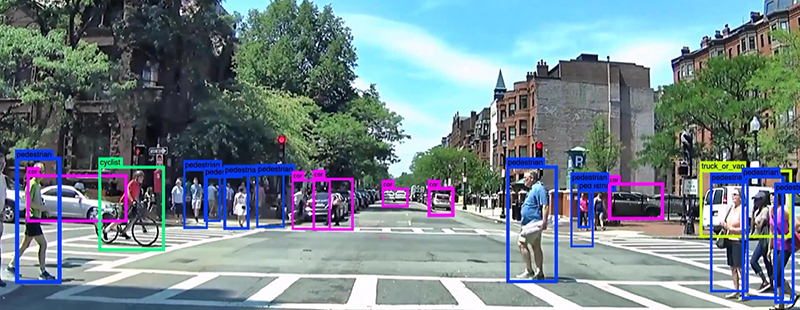

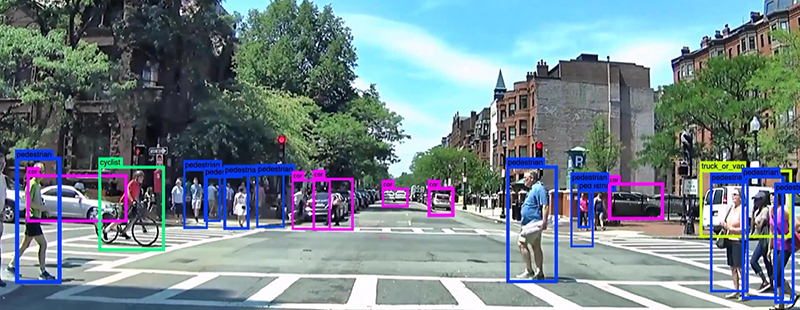

This screenshot shows a self-driving car’s view when powered by Neurala’s software. The vehicle can automatically identify pedestrians, cars, cyclists, trucks, and more on a Boston street in real time.