Fix It Like an Astronaut with Augmented Reality

When astronauts on the International Space Station venture outside to install new equipment or perform maintenance, dozens of people on Earth are involved. These extravehicular activities, or EVAs for short, are reviewed, practiced and supported with instructions from mission-control personnel. This kind of labor-intensive support for field staff is something few companies can afford, but NASA technology is making a new kind of on-demand virtual support available on Earth.

For work such as maintenance on HVAC equipment or repairs at a remote monitoring station, a technician typically follows established “best practices.” But mistakes or unusual circumstances not included in troubleshooting guides can slow productivity and cause costly problems.

Astronauts, like field crews, depend on predetermined instructions. And like those field crews, they sometimes encounter situations that aren’t addressed in the handbook. Especially when dealing with extremely delicate and complex technology, sometimes they need assistance directly from the engineers and technologists who built the system. Today, astronauts in low-Earth orbit can get that expertise through real-time audio and video feeds — but this kind of immediate contact won’t always be possible during deep-space exploration.

For example, astronauts on Mars will face days-long communication blackouts when the planet is on the other side of the Sun from Earth. That’s why NASA has been developing technology to enable astronauts to deal with unexpected situations on their own. Working with Houston-based Tietronix Software Inc., NASA developed a closed-loop system of procedural guidance using machine learning and artificial intelligence.

A closed-loop system is one that contains everything needed to operate and adapt without outside intervention, explains William R. Buras, chief science officer at Tietronix. “That means the system itself can make adjustments on its own.” While astronauts use the technology to guide themselves through tasks, the complicated neural network presents information and identifies ways to improve both guidance and task outcomes.

NASA on Your Shoulder

Over the years, the company has translated long experience in creating software and planning missions for NASA into tools to streamline workflows and automate processes across a variety of fields. The three founders were part of the team at Johnson Space Center that developed software for the space shuttle’s robotic arm. Other senior staff members also have NASA backgrounds, and the company’s long work with the space agency has often helped shape its products and services.

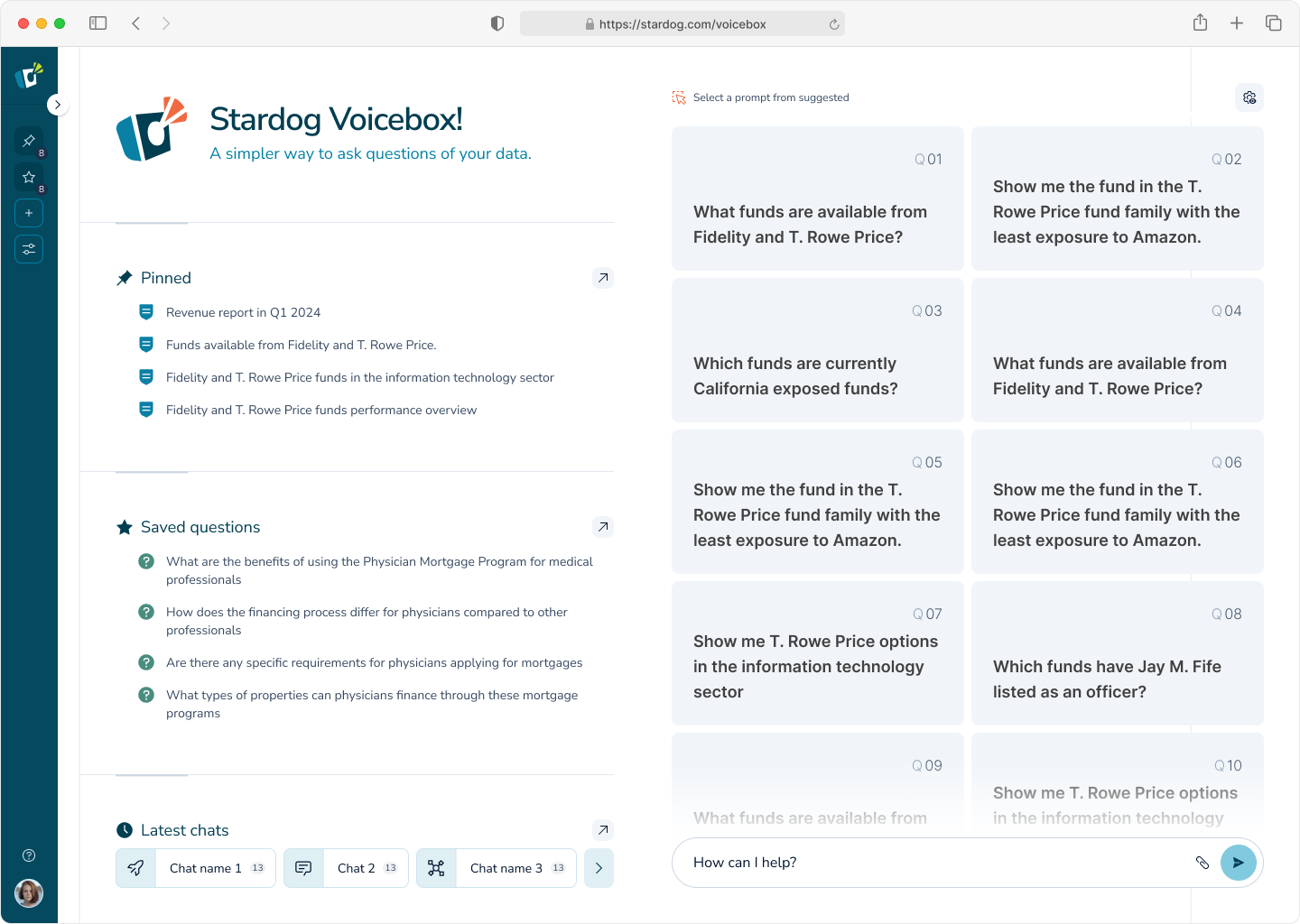

The work that ultimately led to Tietronix’s automated procedural guidance tool took place under multiple contracts with Johnson, including an Intelligence Automation System Services contract, over the course of more than 10 years. ProX is the name NASA ultimately gave to the technology that presents a digital display of step-by-step procedures to guide astronauts through tasks, eliminating the need for outside assistance.

In addition to replacing dozens of binders of printed instructions, this system can provide additional information via a web interface.

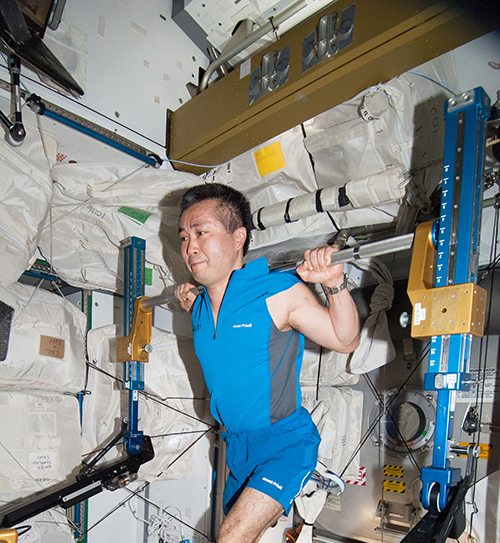

One application of the program is maintenance procedures for the space station’s Advanced Resistive Exercise Device (ARED). The machine uses resistance to help astronauts maintain muscle mass and bone strength in zero gravity. NASA demonstrated and validated ProX by running maintenance procedures on the duplicate ARED on Earth, proving the program worked as designed.

“Some of the things we are asking astronauts to do are very, very hazardous, and an imperfect execution of the procedure could result in serious impairment of a mission or loss of crew,” says Buras.

Building on the initial technology, Tietronix again worked with NASA to add 3D animation, a virtual environment and presentation of all of the features using an augmented reality (AR) display. While NASA uses Microsoft HoloLens, the company designed the software to work with any AR device.

From the foundation of ProX, the company developed Procedure Genius, or ProG. It was first made available in 2016 to help improve staff performance in the private sector. “The idea is that ProG can monitor activity in real time,” explains Buras. “Say you have two buttons, one green and one red. The instructions are to touch the green button, but the technician touched the red button. The system can detect that the wrong button was pushed.”

Unlike simple telemetry from the hardware, the system uses sophisticated algorithms to recognize the mistake, determine how to correct it and direct the remediation.

“Instead of going to the next step, it’ll say, ‘Wait up, you were supposed to push the green button. Now you need to do this to correct it.’ So, it's almost like having an expert or a mentor on your shoulder,” says Buras.
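The green-button example can be sketched as a small closed-loop check. This is only an illustrative sketch, not ProG's actual implementation: the `Step` class, the `guide` function and the recovery text are hypothetical names introduced here, and the observed actions are assumed to arrive as simple strings rather than from an AR headset.

```python
from dataclasses import dataclass


@dataclass
class Step:
    instruction: str  # text shown to the technician
    expected: str     # action that completes the step
    recovery: str     # hypothetical corrective guidance for a wrong action


def guide(steps, observed_actions):
    """Advance to the next step only after the expected action is observed."""
    messages = []
    actions = iter(observed_actions)  # shared across steps, consumed in order
    for step in steps:
        messages.append(f"Do: {step.instruction}")
        for action in actions:
            if action == step.expected:
                messages.append("OK")
                break
            # Wrong action: hold the current step and direct the remediation.
            messages.append(
                f"Wait: expected '{step.expected}', saw '{action}'. {step.recovery}"
            )
        else:
            # Ran out of observations before the step was completed.
            messages.append("Incomplete: no further actions observed")
            break
    return messages


steps = [
    Step("Touch the green button", "green", "Undo the last action, then touch green."),
]
for line in guide(steps, ["red", "green"]):
    print(line)
```

In this sketch the loop does not move on after the red button is pressed. It reports the mismatch, offers the recovery text, and waits for the correct action before marking the step complete.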

The visual display can superimpose text, animation or both over the equipment for each step. All the while, a visual record confirms the successful execution and completion of the work. At the end of the task, the imagery can be sent back to the office as part of a service report.

The system can also be used for hands-on training for new staff or helping seasoned professionals advance their skills in the field instead of a classroom.

EVAs on Earth

Exhausted astronauts can make dangerous mistakes. Buras says the system can help with procedures to support tasks during space travel and while living on the surface of another planet or moon.

The same support is possible at remote sites on Earth, says Frank Hughes, Tietronix president. A former NASA employee with over 30 years of experience training astronauts at Johnson, he knows how valuable this virtual assistance can be.

“Let's say you're out in the field, far away from civilization,” says Hughes. “You’re almost like an astronaut doing an EVA. You could be in Kazakhstan or Antarctica, and you’ll be able to get the job done quickly.”

ProG can also make a call via an internet connection if unusual circumstances occur.

The construction industry uses ProG in the Trimble XR10 hard hat, which has a HoloLens 2 attached. Workers can view various stages of the building process, such as superimposing ductwork that will be added after all the beams are in place. A truck-engine manufacturer uses the same software to ensure all the varied configurations are assembled correctly.

“For example, if you're buying this truck and it's going to live in Minneapolis, then you have to have a heater in the oil pan to make sure it doesn't freeze up. If you buy that same truck for a company down here in Texas, you don't need that piece of gear,” says Hughes.

The inspector looks at the engine through the HoloLens with a superimposed image of the correct engine specification, helping to quickly identify any extra or missing components.
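At its simplest, checking an engine against its specification amounts to comparing two sets of components. The sketch below assumes the components have already been identified and named. The `compare_to_spec` function and the part names are hypothetical, and the recognition step performed through the headset is not modeled.

```python
def compare_to_spec(spec, observed):
    """Return components that are missing from, or extra to, the specification."""
    spec, observed = set(spec), set(observed)
    return {
        "missing": sorted(spec - observed),  # required but not found
        "extra": sorted(observed - spec),    # found but not required
    }


# Hypothetical cold-climate configuration that requires an oil pan heater.
minneapolis_spec = ["engine block", "oil pan", "oil pan heater"]
as_built = ["engine block", "oil pan"]

print(compare_to_spec(minneapolis_spec, as_built))
```

The same comparison run against a warm-climate specification without the heater would instead flag an installed heater as an extra component.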

Dr. Astronaut

Tietronix adapted the system for the oil and gas industry and sees potential use in just about any workflow. NASA, too, is looking for more ways to leverage the technology.

One possibility is supporting delicate medical procedures on long-duration missions, and NASA has funded that development with additional Small Business Innovation Research (SBIR) contracts. The updated version, called Visual Ultrasound Learning, Control and Analysis Network (VULCAN), is already finding use on the ground, providing visual cues that help a sonographer follow ultrasound scanning procedures.

“We're refining the technology for Houston Methodist Hospital’s ultrasound methods and needs in order to prove that it’s effective at enhancing the quality of their ultrasound studies,” says Buras. Using mixed-reality procedural guidance to improve technicians’ accuracy could greatly reduce the number of inaccurate or incomplete reports that need to be redone before a diagnosis can be made.

Japan Aerospace Exploration Agency astronaut Koichi Wakata works out on the Advanced Resistive Exercise Device (ARED) on the International Space Station. Maintenance procedures for the ARED have been digitized to work with the ProX virtual reality guidance technology developed by Tietronix Software Inc. Credit: NASA

Maintenance on the ARED exercise machine on the International Space Station can now be performed using the Microsoft HoloLens. It can display step-by-step instructions and 3D animation to walk astronauts through routine maintenance and repair procedures. A simulation of the equipment, as seen here, also provides a virtual training environment to review procedures. Credit: Tietronix Software Inc.

Mixed reality combines augmented reality with data and animation to superimpose text, graphics or both onto actual objects and environments. Software from Tietronix Software Inc. works with the Microsoft HoloLens, which is now used in the Trimble hard hat for construction project support. Credit: Trimble Inc.